I love the concept of hyperconvergence. Who doesn’t? An IT infrastructure built out of relatively balanced (and small) nodes that all contribute to a large pool of compute and storage resources, which can scale linearly just by adding more nodes.

Thanks to the latest advances in software, this kind of infrastructure has become very easy to manage and could be the answer to many different types of workloads… but, as I’ve written in the past, not all of them. Sometimes, particular compute or storage needs mean it just doesn’t work out!

Even market leaders such as Nutanix are aware of this issue and plan to offer specialized products (in Nutanix’s case, a scale-out storage solution built on the same technology that underpins its distributed storage layer, but optimized for large capacities).

“On average” workloads are well served

The number of solutions out there is impressive, and new hyperconverged players are popping up on a weekly basis! Fortunately, from the end-user point of view, most of them are already positioned in specific market segments.

It’s not only about pricing: product positioning is often determined by specific features, and the most common differentiator is hypervisor support. For example, KVM-only solutions are usually aimed at the low-end market, while VMware-only or multi-hypervisor support is much more common in higher-end products. And the higher you go, the more you get… in fact, OpenStack support is quickly becoming another must-have feature for large enterprises.

But even the most powerful and feature-rich solutions target traditional enterprise workloads, leaving out very high-end needs and verticals such as HPC and Big Data analytics.

When “on average” is not enough

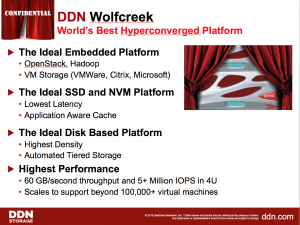

Last week DDN launched Wolfcreek, a beast capable of a tremendous amount of IOPS and throughput. The solution targets enterprise HPC and Big Data workloads, as well as classic VM workloads.

I’ll admit that I’m skeptical about its chances in the last category, but I’m equally sure that DDN has huge potential in the first two!

DDN is positioning itself at the very high end of the enterprise market, which is clearly within its comfort zone and where it has only one competitor today (HDS, with its HSP and HCP platforms). I’m not saying that others (EMC, for example) have nothing to offer… but, at the moment, these are the only two vendors with pre-packaged end-to-end solutions.

The differentiator

These appliances offer massive horsepower and can easily handle Big Data and other data-intensive workloads. Thanks to OpenStack support, they can also be a great tool for quickly provisioning resources to different departments, handling load peaks and scaling rapidly when needed.

Furthermore, the OpenStack APIs make it relatively easy to develop custom applications, while integration with each vendor’s own object storage environment makes it easier and more cost-effective to manage vast amounts of data in a tiered fashion!

Closing the circle

DDN has done a great job with Wolfcreek and its multi-tier storage strategy. This small but very successful storage company has come up with a great product line-up and is quickly moving from being a niche player (in HPC) to a more mature vendor serving large and hyper-scale enterprises.

At the moment, DDN and HDS have the most interesting vision when it comes to this kind of problem. They can be hard to compare at times, but both have the culture, the consulting services and really compelling integrated hyperconverged solutions to address demanding high-end needs and specific verticals (especially Big Data). If hyperconvergence has a role in simplifying traditional infrastructures, that role could be even greater in enterprise HPC/Big Data deployments!

Two vendors have announced solutions in this space within three months… I’m pretty sure we will see a few more before the year is out.